Why Great Content Still Dies on Broken Pages

Most teams miss the real problem. An SEO audit tool often shows why strong content stalls before Google ever tests it. Traffic stays flat, rankings drift, and teams answer with more posts. That is backwards. According to "This Page is Designed to Last: A Manifesto for Preserving Content on the Web," web content can disappear or degrade within 7 years. Research from Perma.cc at Harvard Law School documents that over 50% of links in Supreme Court opinions and legal scholarship suffer from link rot.

We built Mygomseo to catch technical friction before content goes live. Our audit-driven workflow helps growing teams find hidden blockers fast.

This matters because publishing more will not fix weak indexing, broken links, or slow pages. We will show what suppresses performance, why checks come first, and how we catch issues early.

Why Technical SEO Silently Kills Content ROI

The current state of content production

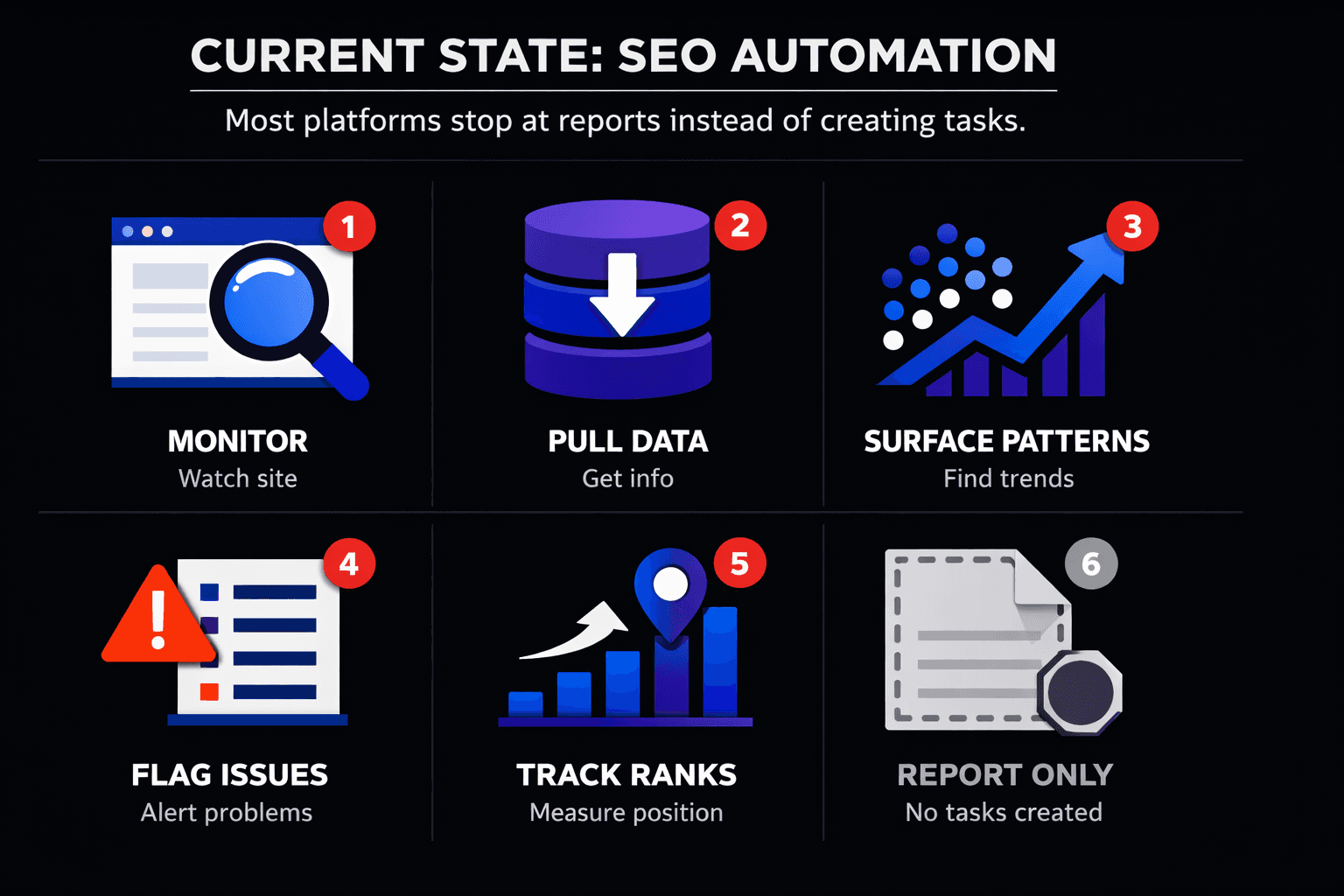

Most teams still run content and technical SEO on separate tracks. Writers ship articles. Developers fix issues later. Leadership then judges results by output, not by whether pages could actually perform. That split is why a strong article often gets labeled a failure too early.

We see this pattern everywhere. A team publishes fast, celebrates volume, then wonders why rankings stall. The answer is often not the topic or the writing. It is indexability, weak internal linking, poor page structure, or slow templates hurting Core Web Vitals. A good SEO audit tool helps surface those blockers before they distort the verdict on content.

For example, we once had 47 browser tabs open, deep into a review, and still felt unsure. The draft was solid. The search intent matched. But the page sat three clicks deep, loaded slowly on mobile, and lacked clear heading structure. The content was not the miss. The system around it was.
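Click depth like the "three clicks deep" problem above can be measured rather than guessed. The following is a minimal sketch, assuming you already have an internal link graph from a crawl; the `site` dictionary and its URLs are hypothetical, and this is not a description of how any particular tool computes depth.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage.

    Returns the minimum number of clicks needed to reach each page
    that is reachable through internal links.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/category"],
    "/blog/category": ["/blog/new-article"],
    "/blog/orphan": [],
}

depths = click_depths(site, "/")
# Pages that exist in the crawl but cannot be reached from the homepage.
orphans = sorted(set(site) - set(depths))
```

Here `/blog/new-article` comes back at depth 3 and `/blog/orphan` is never reached. Whether three clicks is too deep is a judgment call for each site; the point is to see the number before launch.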

Why more publishing rarely solves weak performance

When a page underperforms, most teams respond with more production. We think that instinct is backwards. If technical friction exists, publishing more only scales waste. It creates more URLs that compete for crawl attention, inherit weak templates, and fail the same way.

This is the real answer to a common question: why does good content not rank on Google? Because Google cannot reward what it cannot access, trust, or interpret cleanly. Strong writing still depends on technical SEO and on-page SEO basics working together.

If your team treats audits as a cleanup step, you will keep drawing false conclusions. That is why we keep pushing pre-publish checks and simpler workflows, as we explain in "SEO Audit Tool Feature Creep: Which Checks Actually Matter?"

The hidden costs of technical neglect

Technical neglect rarely announces itself with a dramatic crash. It shows up as softer losses. Impressions plateau. Clicks slip. Conversions weaken. Then teams blame the content calendar.

Research from Pew Research Center found that 20% of webpages contain at least one broken link. According to Pew Research Center, 25% of webpages that existed between 2013 and 2023 are no longer accessible as of October 2023. The same study found that 10% of tweets from U.S. members of Congress disappear within the first week of posting. Different format, same lesson: digital assets decay fast when nobody maintains the infrastructure.

So, can technical SEO affect content performance? Absolutely. In our view, content strategy without pre-publish technical checks is incomplete and increasingly expensive. Leaders should stop treating technical fixes as backlog work and start treating them as a publishing requirement.

Why Our SEO Audit Tool Starts Before Publishing

Our perspective on pre-publish SEO

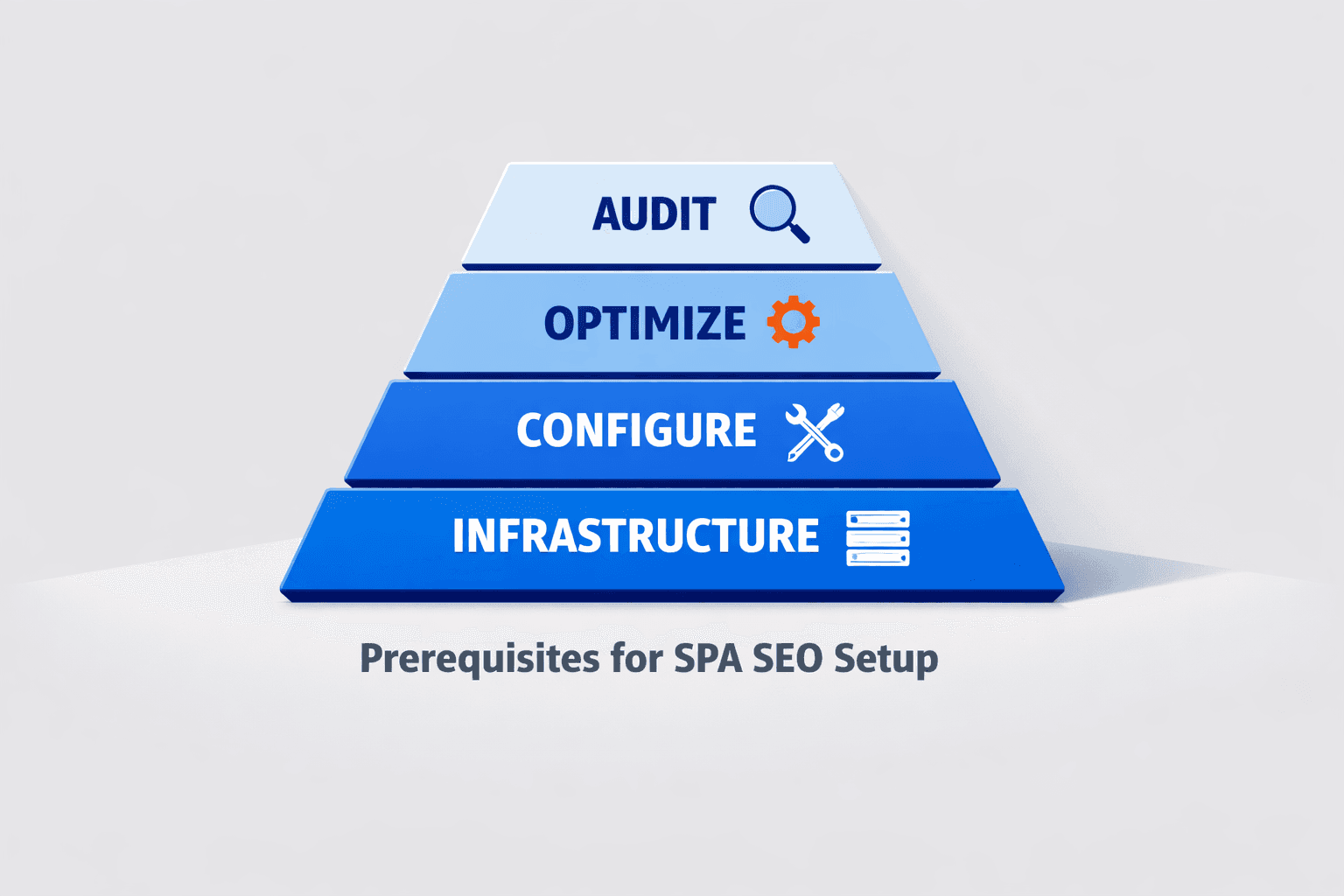

We do not use an SEO audit tool as a cleanup report. We use it as a publishing gate. That shift matters more than most teams admit. If content enters a weak environment, even strong writing can look like a failed strategy.

The conventional playbook says publish first, then audit later. We think that is backwards. A page should face technical SEO, on-page SEO, and performance checks before it goes live. That is how we protect content investment instead of explaining losses after the fact.

We learned this the hard way. One review session still stands out. The draft was strong. The search intent was clear. Then we checked the page setup and found weak internal links, missing metadata, and media that dragged Core Web Vitals down. Nothing looked broken in the doc. Everything was fragile in production.

That is why we ask a simple question before launch: what could bury this page before Google even judges the content fairly?

What we built and why we built it

We built our workflow because too many teams were wasting budget on articles that never had a fair shot. The problem was not always the brief. It was the environment around the page. A good post would go live with preventable issues, then get labeled a content miss.

What should an SEO audit tool check before publishing? It should check crawl basics, index signals, canonicals, internal links, metadata, heading structure, image weight, and template issues that can hurt speed. It should also catch page elements that weaken clarity or relevance before those problems hit performance.
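Several of the checks listed above can be run against a page's HTML before it ships. This is a minimal sketch using Python's built-in `html.parser`, covering only the title, meta description, canonical, `noindex`, and heading structure. The class and function names are hypothetical, and checks such as image weight or template speed need other tooling.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects the on-page signals this sketch cares about."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.canonical = None
        self.noindex = False
        self.heading_levels = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            name = (attrs.get("name") or "").lower()
            content = attrs.get("content") or ""
            if name == "description":
                self.meta_description = content
            elif name == "robots" and "noindex" in content.lower():
                self.noindex = True
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")
        elif tag in ("h1", "h2", "h3", "h4", "h5", "h6"):
            self.heading_levels.append(int(tag[1]))

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_html(html):
    """Return a list of human-readable issues found in the page."""
    audit = PageAudit()
    audit.feed(html)
    issues = []
    if not audit.title.strip():
        issues.append("missing title")
    if not audit.meta_description:
        issues.append("missing meta description")
    if not audit.canonical:
        issues.append("missing canonical")
    if audit.noindex:
        issues.append("page is set to noindex")
    if audit.heading_levels.count(1) != 1:
        issues.append("expected exactly one h1")
    levels = audit.heading_levels
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"heading jumps from h{previous} to h{current}")
    return issues
```

A page with a title and canonical but a `noindex` robots tag, no description, and an `h1` followed directly by an `h3` would return three issues. The rules here (one `h1`, no skipped levels) are common conventions we chose for the sketch, not requirements stated by Google.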

This matters because the web decays fast. According to "At Least 66.5% of Links to Sites in the Last 9 Years Are Dead (Ahrefs Study on Link Rot)," 30% of social media links decay within two years. Research from "Link Rot and Digital Decay on Government, News and Other Webpages" (Pew Research Center) shows 10% of tweets disappear within a week. We have seen broken digital paths multiply fast: one analysis found that a single broken link can create a 4x effect in exposure loss (source). We do not wait for that decay to show up in reports.

If you want the deeper version of this argument, our take in "SEO Audit Tool Feature Creep: Which Checks Actually Matter?" explains why fewer, sharper checks often win.

How our workflow fits content teams

When should you run an SEO audit on new content? Before publishing, not after indexing stalls. We run checks when the draft is nearly final and again before release. That timing gives teams room to fix real issues without slowing production.

Our workflow fits how content teams already work. Writers finish the draft. Editors review message and structure. Then the page passes through one final gate that checks the live environment, not just the words on the page. That keeps responsibility clear and prevents last-minute guesswork.
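One way to picture a final gate is as a function that takes check results and returns a publish decision. The sketch below is illustrative only: `CheckResult`, `gate_decision`, and the split between blocking and non-blocking checks are assumptions for the example, not a description of our product's internals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    name: str
    passed: bool
    blocking: bool = True  # non-blocking failures become warnings


def gate_decision(results):
    """Allow publishing only when no blocking check has failed."""
    blockers = [r.name for r in results if not r.passed and r.blocking]
    warnings = [r.name for r in results if not r.passed and not r.blocking]
    return {"publish": not blockers, "blockers": blockers, "warnings": warnings}


# Hypothetical results for a draft that is almost ready.
results = [
    CheckResult("indexable", passed=True),
    CheckResult("canonical matches URL", passed=False),
    CheckResult("meta description present", passed=False, blocking=False),
]
decision = gate_decision(results)
```

In this example the canonical mismatch blocks the release while the missing description is only a warning. Which checks block and which warn is a team decision, and keeping that list short is what stops the gate from slowing production.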

We are not trying to create more process. We are trying to remove silent blockers early. Leaders should stop treating audits as post-mortems and start using them to help content compete on merit.

How Core Web Vitals and On-Page SEO Distort Results

The signals we check first

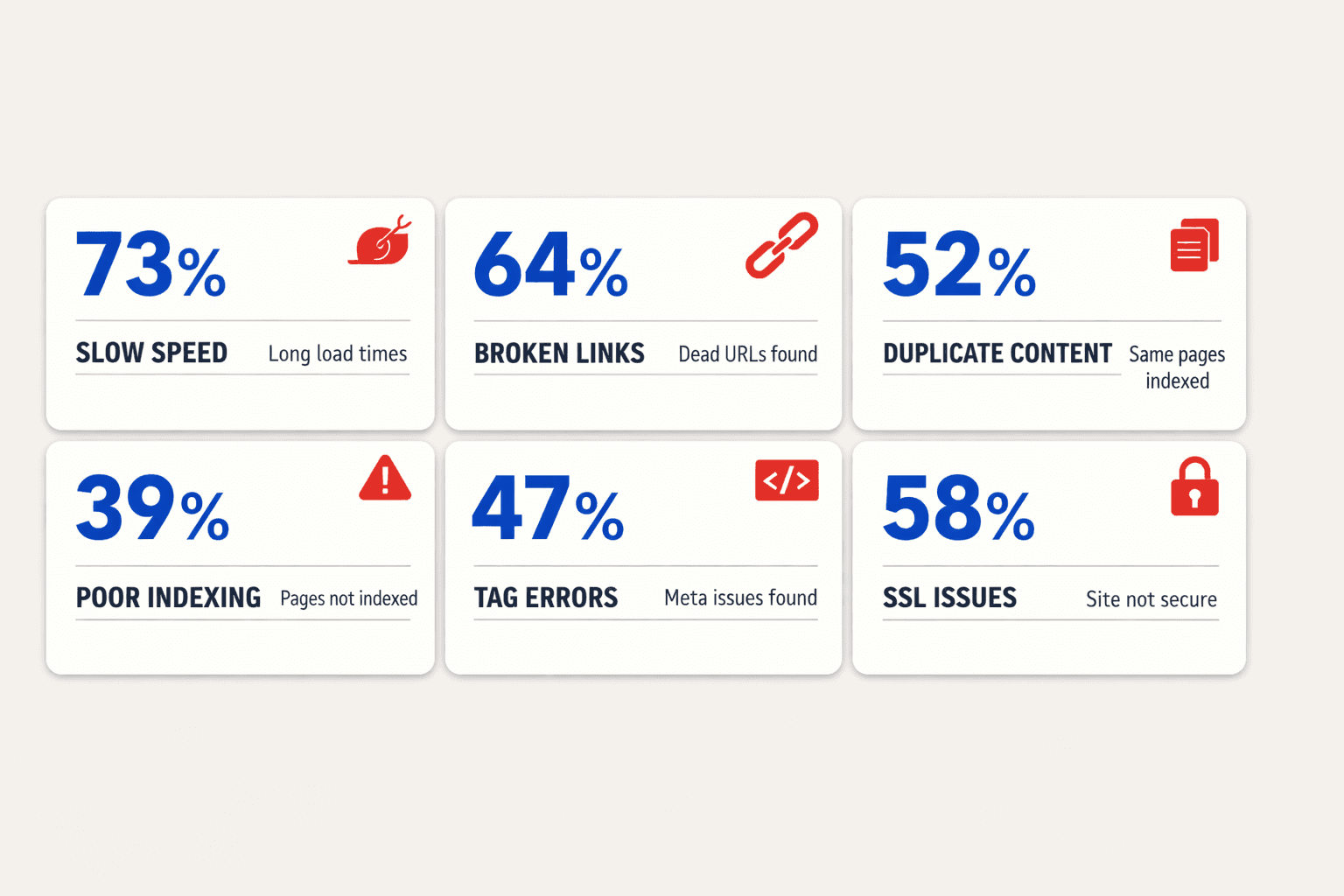

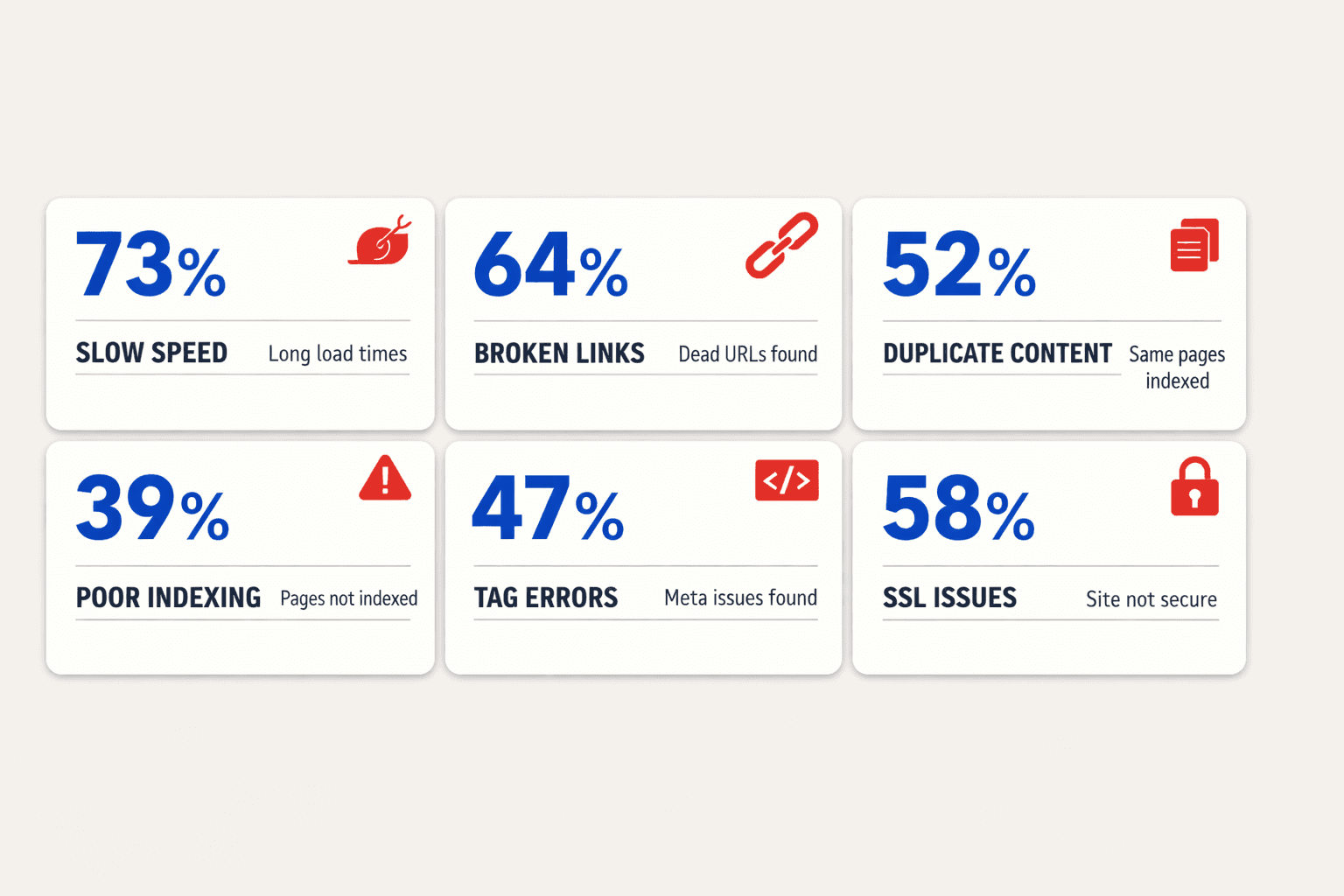

We check render delays first because they hide quality. When above-the-fold content loads late, users bounce before the article proves its value. Poor image handling, heavy scripts, and unstable layouts turn good writing into a slow first impression. Core Web Vitals matter because they shape access to the content, not just the content itself.
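To make "Core Web Vitals" concrete, here is a small sketch that rates measurements against Google's published thresholds for Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). The metric keys and the `rate` function are our own naming for illustration, and the values are assumed to come from whatever field or lab data you already collect.

```python
# (good_up_to, needs_improvement_up_to) per metric, per Google's published guidance.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate(metric, value):
    """Classify one measurement as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for a page on a heavy template.
page = {"lcp_seconds": 3.1, "inp_ms": 650, "cls": 0.05}
ratings = {metric: rate(metric, value) for metric, value in page.items()}
```

For this hypothetical page, LCP lands in "needs improvement", INP is "poor", and CLS is "good". A page in that state can carry excellent writing and still give users a slow first impression.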

Next, we look at on-page SEO basics that quietly distort rankings. Thin internal context leaves pages isolated. Duplicate metadata blurs intent across URLs. Broken heading structure weakens topical clarity for both users and crawlers. Inconsistent canonicals create even worse confusion, especially on sites with reused templates and tag logic.
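Duplicate metadata and inconsistent canonicals are both easy to spot once page data sits in one place. The sketch below assumes a hypothetical `pages` mapping of URL to title and canonical, as a crawl export might provide; the function names and the rule used to flag a canonical are assumptions for illustration.

```python
from collections import defaultdict


def duplicate_titles(pages):
    """Group URLs that share the same title, ignoring case and outer whitespace."""
    by_title = defaultdict(list)
    for url, page in pages.items():
        by_title[page["title"].strip().lower()].append(url)
    return {title: sorted(urls) for title, urls in by_title.items() if len(urls) > 1}


def canonical_conflicts(pages):
    """Flag canonicals that point to an unknown page or to a page that
    does not declare itself as canonical."""
    conflicts = {}
    for url, page in pages.items():
        target = page["canonical"]
        if target == url:
            continue  # self-referencing canonical, nothing to flag
        if pages.get(target, {}).get("canonical") != target:
            conflicts[url] = target
    return conflicts


# Hypothetical crawl export.
pages = {
    "/guide": {"title": "SEO Guide", "canonical": "/guide"},
    "/guide-2025": {"title": "seo guide ", "canonical": "/guide-2025"},
    "/tips": {"title": "SEO Tips", "canonical": "/old-tips"},
}

dupes = duplicate_titles(pages)
conflicts = canonical_conflicts(pages)
```

Here `/guide` and `/guide-2025` compete under the same title, and `/tips` points its canonical at a URL the crawl never found. Neither problem is visible in the draft itself.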

The biggest ranking killers are rarely dramatic. We usually find bloated templates, weak internal linking paths, missing hierarchy, and pages that compete against themselves. A solid SEO audit tool should flag those patterns before a team writes another article. If it does not, it is measuring the wrong risks.

What our client results showed

In client work, we kept seeing the same pattern. Teams assumed a topic failed because traffic stalled. Then we fixed crawl waste, cleaned metadata, improved image delivery, and tightened page structure. Rankings often stabilized faster than they did after publishing another content batch.

That does not mean content quality matters less. It means quality cannot overcome recurring technical friction at scale. If a site sends mixed signals, even strong pages fight unnecessary resistance. We would rather remove that resistance first than celebrate another draft in the queue.

For example, we remember one review with 47 browser tabs open. The article itself was sharp. The headline worked. The examples were strong. But the page loaded through a bulky template, the canonical pointed elsewhere, and the H2 flow skipped key context. It did not need a rewrite. It needed a rescue.

Real examples of silent suppression

Silent suppression usually looks boring. That is why teams miss it. A page gets indexed, but not crawled often enough. Another page ranks briefly, then slips because duplicate titles spread relevance across similar URLs. Another earns visits, but engagement drops because the layout shifts as images load late.

This is where technical detail matters. Render delays reduce patience. Bloated templates dilute focus. Weak on-page SEO lowers clarity. Inconsistent canonicals split authority. Each issue seems small alone. Together, they reduce the odds of success before the topic gets a fair test.

Some will argue that great content should still win. We think that misses the point. Search performance is not a purity contest. It is an environment test. Leaders should stop treating technical fixes as cleanup and start treating them as leverage. If you want a deeper view, read "Why Your Technical SEO Audit Should Start With HTTPS (Not Content)."

The Teams That Win Will Publish Smarter

We believe the next edge in search will not come from teams that simply produce more. It will come from teams that connect content operations with lightweight technical QA before launch. That shift is bigger than process hygiene. It changes how leaders protect budget, how teams set priorities, and how performance gets interpreted. When pages are checked before they go live, content earns a fair chance to rank on its actual value.

This is the operational change leaders need to make now. Stop treating output as the main signal of success. Start tracking whether pages are ready to compete. That means watching indexability, page health, crawl access, template stability, and Core Web Vitals alongside editorial delivery. It also means using an SEO audit tool before publication, not weeks later when traffic stalls and teams start guessing.

Some teams will argue that this slows production. We think that view is outdated. Small technical checks upstream prevent larger losses downstream. They reduce avoidable rework. They protect internal resources. They help teams spend time improving pages that can perform, instead of explaining why strong articles never took off. In practice, that is how mature teams move faster.

Our prediction is clear. The teams that build an SEO audit tool into publishing will outperform teams that treat it as a rescue step after decline. They will spot blockers earlier, launch with more confidence, and get more return from every article they approve. That is the real shift ahead: fewer blind launches, fewer false negatives, and stronger content performance because the technical groundwork was handled first.

If your team is still measuring content success by how much it ships, it is time to raise the bar. Measure readiness, not just output. If you want to see how that shift can work in practice, learn more.